Artificial Intelligence in Studying & Teaching

Our Focus Topics

Important Links for GPT@RUB

Our Activities Around AI in Studying and Teaching

Legal Opinion on Generative AI in Higher Education

Our legal opinion on generative AI in higher education, published in 2023, provides clarity on didactic and legal questions. The opinion was commissioned by the Ministry of Culture and Science of the State of North Rhine-Westphalia and prepared as part of the KI:edu.nrw project, which we coordinate.

Legal Opinion on the Data Protection Framework for Learning Analytics

A critical success factor for implementing learning analytics at universities is a considered and correct approach to data protection and compliance with legal requirements. The preparation of the opinion was funded by the State of North Rhine-Westphalia as part of our KI:edu.nrw project. It was led by the data protection team of Ruhr-Universität Bochum and of the project, Dr. Kai-Uwe Loser and Christopher Lentzsch, who coordinated the comprehensive legal assessment by Datenrecht Beratungsgesellschaft.

Tools such as ChatGPT assist with writing, analysing, structuring or summarising texts, generate program code, check spelling and grammar, translate texts into other languages, generate images, and can help with creative tasks and brainstorming. Chat histories can be retained or exported.

With GPT@RUB, RUB now offers all its students, teaching staff and employees a privacy-friendly way to use ChatGPT, as well as open-source language models, free of charge. After all, if students, for example, are to learn how to use these tools during their studies, they need reliable access.

Generative technologies can be used in many meaningful ways in teaching, studying, research and administration. However, their use also raises many questions: What can university members do with GPT@RUB? What is permitted under examination law? What is not?

The ZfW offers a wide range of information and training on GPT@RUB, as well as individual advice if you have questions.

FAQ: Generative Artificial Intelligence at RUB

Last update: 09.04.2024

Basic Questions

Some providers of plagiarism detection software advertise that their programs can detect AI-generated text. User reports and a study show that this works only inadequately. In addition, although the analysis reports a probability (for example: “There is a probability of XX% that this text was created with AI support”), the burden of proof still lies with the teachers. In a legal dispute in Bavaria over a cheating attempt in an essay submitted as part of an admission procedure, the software analysis was not accepted as sufficient justification. What was accepted instead was the experience of the evaluating professors, who pointed out irregularities by comparing the essay with the texts of other students. Software analyses can also fuel mistrust between teachers and students: even a low probability score can suggest that the text might, in principle, have been generated by an AI, and this could influence the teachers' assessment. Accordingly, students express concern that texts they wrote themselves could be wrongly classified as AI-generated, and they fear unwarranted negative consequences.

Even beyond questions of feasibility and accuracy, using software to uncover AI-generated text does not seem sensible in the long run: if text-generating AI applications are used productively and are soon integrated into common word processors (as is already partly the case), they can become part of everyday text production. Hybrid texts will then emerge, and the question of whether a text originates from a human or an AI will no longer need to be viewed critically (given appropriate transparency and an appropriate context).

Further information on plagiarism and plagiarism prevention is available here.

Didactic Questions (with Legal Relevance)

A complete ban on generative AI generally seems neither feasible nor sensible if AI applications are to become part of professional practice in academia and other occupational fields. Accordingly, a reflective approach to such tools should also be addressed during studies.

This does not mean, however, that there cannot be situations and contexts in which their use is prohibited. Both permission and prohibition can apply to the use of AI tools for writing term papers, theses or other comparable written examination formats. When deciding, teachers must consider what the learning objective of the course is and how it can be achieved, with or without AI applications, and whether, for example, the reflective use or the evaluation of AI-generated texts is among the course's learning objectives. If the use of AI applications is prohibited for certain tasks, this should be made clear and transparent, for instance in the instructions for preparing the paper, just as is customary for other permitted or prohibited aids. In formal terms, there is no difference between other aids and generative AI.

Since AI-generated texts will be difficult to detect in the future (see: “Can AI-generated texts be detected?”), the prevention of academic misconduct should rely on the measures that were already recommended for this purpose before generative AI. These include transparency about which aids are permitted, teaching the values of good scientific practice and academic integrity, and opportunities for students to practise what productive use of such tools can look like and how they should label that use.

To integrate AI applications meaningfully, teachers should first ask themselves what the learning objectives of their course are. On that basis, they can consider whether AI applications can be used meaningfully and perhaps even be a subject of learning themselves. A reflection in the form of a comparison can help: What does a student learn when they solve the task without aids? What does the student learn, or how do the learning objectives change, when students use an AI application for support? Students can also encourage teachers to address AI applications in a course and to try them out together.

In any case, it should be transparent to students why AI applications are or are not permitted in the course or the associated examination. It is also important to set rules, which may well be situational and context-dependent (for example: In this session we will work without aids because…; or: If you use generative AI for this task, please label it as follows: …).

Since the technology is new to everyone involved, rules such as labelling requirements still have to be negotiated. This is also an opportunity for teachers and students to enter into dialogue and agree on the requirements and expectations in a course. It is advisable for faculties to agree on uniform rules, either for all degree programmes of the faculty or for individual degree programmes. The Zentrum für Wissenschaftsdidaktik (Centre for Teaching and Learning) is happy to advise teachers on the didactic design of courses, and Department 1 (Dezernat 1) on establishing rules.

Legal Questions (with Didactic Relevance)

For RUB, we assume that examination regulations do not need to be adapted, or not extensively, with regard to generative AI. Declarations of independent work generally already require that all aids be stated. This also applies to generative AI. It is advisable, however, to specify this in the information students receive about permitted and prohibited aids for examinations.

In addition, it must be decided for each course, in light of its learning objectives, whether and how the use of generative AI is permissible. Where this is relevant to examinations, the rule must be communicated to students at the beginning of the course together with the other information on the examination. It is then also binding for the teachers.

If teachers plan supplementary oral examinations for written assignments, the examination regulations must permit this, and it must be indicated accordingly in the module description.

The standard rule on cheating applies here: “If the candidate attempts to influence the result of an examination or piece of coursework by cheating or by using unauthorised aids, the examination or coursework in question is deemed to have been graded ‘insufficient’ (5.0). The finding is placed on record by the respective examiner or invigilator. The assessment is made by the examination board. In the case of repeated or otherwise serious cheating attempts, the candidate may, after a prior hearing, be excluded from further examinations and exmatriculated.”

In concrete terms, this means that you as the examiner must decide whether the student used AI as an unauthorised aid or failed to disclose its use. This depends on the aids you designated as permissible for the examination. The formal decision on whether a cheating attempt has occurred is made by the examination board.

Examination work that goes beyond highly pre-structured formats such as multiple-choice exams (for example, theses) is protected by copyright. If the data entered as a prompt is stored and reused, as is the case with ChatGPT, entering an examination paper constitutes an impermissible reproduction and therefore a copyright infringement.

From the perspective of examination law, moreover, an assessment may only be made by the examiners, not by software. Under examination law it is therefore possible to use AI applications as an aid in the assessment process, but the result must be critically reviewed, and the grade itself can only be determined by the person responsible for the assessment.

Support

At RUB you can get support with both didactic and examination-law questions.

Many of the questions raised by AI applications touch on didactic fundamentals that were already relevant before the release of ChatGPT, for example: How can students learn academic writing? How do I prevent plagiarism? How do I design a seminar to achieve the intended learning objectives? The Zentrum für Wissenschaftsdidaktik (Centre for Teaching and Learning) is happy to advise teachers on these and similar questions.

Students are warmly invited to seek advice from the RUB Writing Centre (Schreibzentrum).

Other questions concern legal aspects, for example: How can I regulate the use of generative AI in my course in a legally sound way? How do I safeguard a change of examination format under examination law? Section 1 (Abteilung 1) in Department 1 of the Administration will advise you on such questions.

Please feel free to contact us with your concerns!

English FAQ on Generative AI

What is generative AI?

Generative AI refers to technical systems that are able to generate content – e.g. texts, images, music or videos. The best-known example at present is ChatGPT: in response to an input (a so-called ‘prompt’), suitable text segments are generated in natural (human-like) language. Artificial Intelligence (AI) is the established term for models and systems developed with the help of various machine learning methods. These models are ‘trained’ using large amounts of data to generate content based on probabilities.

Are AI-generated texts factually correct?

Large language models such as ChatGPT are essentially probability distributions: they calculate which word is likely to follow another in a given context. As a result, the generated output is not always factually correct. For very general and well-known facts, the generated answers are usually valid, but as questions become more specific, false content is generated more frequently (so-called ‘data hallucinations’). False answers are nevertheless presented in the same linguistically plausible form, so they cannot be told apart at first glance and critical examination is necessary.

Is it possible to detect AI-generated texts – for example with the help of special software?

Some providers of plagiarism detection software advertise that their programmes can detect AI-generated texts. User experience and a study show that this works only inadequately. Additionally, the results of the analysis are presented as a probability (e.g.: “There is a probability of XX % that this text was AI-generated”), so teachers would still be obligated to prove the use of AI, which is difficult. In a legal dispute in Bavaria regarding an alleged cheating attempt concerning an essay written for an admission procedure, the software analysis was not recognised as sufficient justification. However, the experience of the evaluating professors, who pointed out irregularities by comparing the text with those of other students, was accepted as justification. AI detection analyses can also fuel mistrust between teachers and students: even low percentages in the probability analysis may suggest that the text could have been generated by an AI, thus possibly influencing the teacher’s assessment. Students express concern that texts they wrote themselves could be wrongly classified as AI-generated and fear unwarranted negative consequences.

Even beyond questions of feasibility and accuracy, using software to detect AI-generated texts does not seem sensible in the long run: if text-generating AI applications are used sensibly and soon become integrated into common word-processing software (which is already happening), they might become part of everyday text production, paving the way for hybrid texts and making the question of whether a text originates from a human or an AI irrelevant (given appropriate transparency and the right context).

Should the use of ChatGPT and other such tools be prohibited?

A complete ban on generative AI generally seems neither feasible nor sensible if AI applications are to become part of professional practice in science and other fields. Accordingly, students should be guided in learning how to use these tools and how to work with them productively.

This does not mean, however, that there cannot be situations and contexts in which their use is prohibited. Both permission and prohibition can apply to the use of AI tools for writing assignments, theses or other comparable written examination formats. When deciding whether to allow the use of these tools, teachers need to consider the intended learning outcomes of their courses and how these can be achieved, with or without AI applications, and whether, for example, reflecting on the use of AI or critically revising AI-generated texts is part of the intended learning outcomes. If the use of AI applications for certain assignments is prohibited, this should be made transparent, e.g. in the instructions for the preparation of the assignment, just as is customary for other permitted or prohibited aids. There is no formal difference between other aids and generative AI.

Since it will be difficult to recognise AI-generated texts in the future (cf. “Is it possible to detect AI-generated texts?”), the measures that were recommended for preventing academic misconduct in the past can be used in connection with AI as well. These include transparency regarding the permissibility of aids and tools, teaching the values of good scientific practice and academic integrity, as well as practical opportunities for students to learn what the productive use of such tools can look like and how the use should be indicated.

How can tools like ChatGPT be integrated into courses?

For a sensible integration of AI applications, teachers should first ask themselves what the intended learning outcomes of their courses are. Based on this, they can consider whether AI applications can be used in a reasonable way (or perhaps even be a learning object themselves). A reflection in the form of a comparison can be helpful: What does a student learn when they solve the assignment without tools? What do they learn, and how do the intended learning outcomes change, when they use an AI application as support? Students can also encourage teachers to address the use of generative AI tools in their courses and to try them out together.

In any case, it should be made transparent in class why generative AI tools are allowed or not in a course or for an exam. In addition, it is important to establish rules, even if they may change for certain situations and contexts (for example: In this session we will work without assistive technology because...; or: If you use generative AI for this task, please mark it this way: ...).

Since the technology is new for all parties involved, rules – such as labelling requirements – still need to be negotiated. However, this is also an opportunity for students and teachers to enter a dialogue and to discuss the requirements and expectations in a course. It is recommended that faculties agree on standardized regulations for all degree programs in the faculty or for specific degree programs. The Centre for Teaching and Learning (ZfW) is happy to advise lecturers on questions regarding the didactic design of courses and Department 1 on the establishment of regulations.

Does it still make sense to hand out writing assignments?

Yes, it does! Writing has multiple functions (not only) in academia. Some of them are more text-centered (e.g. researchers make their results available via texts and use them to communicate in research discourse), some of them more process-oriented (e.g. when writing is used as a thinking, structuring or learning aid). Through reading and writing texts, people do not only learn about content, but also about thought and argumentation structures of their subject. It is important to make these benefits – and everything else that happens during writing – visible to enable writers to make decisions about whether they prefer writing on their own or using AI applications to assist them. The RUB’s Writing Centre offers support on issues related to writing in studying and teaching.

Is it a good idea to use generative AI tools?

This is a question that has no universal answer. Whether the use of generative AI makes sense depends on many factors that need to be weighed up against each other. AI applications can be used to promote the learning process, but they can also prevent learning from taking place if they are used to outsource certain cognitive tasks. It helps to ask some questions on why you want to use generative AI: Because it saves time? Because the generated content is better than what you could write yourself? Because it helps you to delve deeper into your own text and the content of a topic? You should get to the bottom of these questions before deciding for or against the use of AI applications. The RUB Writing Centre is happy to advise all of RUB’s writers when they have difficulties regarding their writing process.

Is the use of generative AI permitted at RUB?

Yes: Generative AI tools can be used at RUB in research, teaching and studies. However, their use can be restricted in certain contexts (e.g. written examinations) or linked to labelling requirements. In this respect, generative AI tools are no different from other tools. This means that the rules and requirements for the use of AI tools must be clarified in the instructions for the preparation of a term paper (together with other permissible or non-permissible aids). Additionally, there needs to be an explanation of how students must state the use in the declaration of independent work, e.g. “I used ChatGPT exclusively for generating the outline of my term paper.” or “I used ChatGPT for revising my term paper.” In this respect, too, the use of AI tools is no different from other tools.

Who is the author of an AI-generated text?

Generally, neither the developers who trained the model nor the model itself has authorship of AI-generated content. However, authorship can lie with the users if they continue to work with the generated content and it thus becomes a product of the users’ own intellectual work. However, it is a case-by-case decision whether authorship can be attributed or not.

Are there labelling obligations for AI-generated content?

One of the basic values in academia is that processes of knowledge-making should be as transparent as possible. However, conventions for labelling AI-generated content have not yet been developed. From a legal point of view, two aspects in particular must be taken into account for examinations. Firstly, every examination regulation stipulates for written examinations that the permitted aids are announced at the beginning of the semester in which the module takes place or together with the assignment. If the use of an AI application is permitted in this context, the student confirms with the submission of the written paper not only that the work was written independently, but also that no sources and aids (in this case, including the use of AI tools) other than those specified were used and that citations were marked. Secondly: If regulations about permitted/non-permitted aids, issued to students with topics or at the beginning of a semester, state an obligation for labelling, it needs to be complied with.

Do new regulations have to be made and existing ones changed?

For RUB, we assume that examination regulations do not have to be adapted, or not extensively, with regard to generative AI. As a rule, declarations of independent work already stipulate that all aids must be stated. This also applies to generative AI. However, it would be advisable to specify this in the information that students receive about permitted and non-permitted aids in examinations. Furthermore, it must be decided for each course, in light of its intended learning outcomes, whether and in what way the use of generative AI is permissible. If it is relevant for the examinations, the regulation must be communicated to the students at the beginning of the course together with other information on the examination. It is then also binding for the teachers.

If lecturers are planning oral examinations in addition to written assignments, examination regulations must permit this and it must be indicated accordingly in the module description.

Can people be obliged to use tools such as ChatGPT?

If the use of AI tools is to be mandatory, the terms of use of the respective software must be observed. In particular, it depends on how the user's data is handled. This can vary greatly depending on which software or platform is used. If no data protection-compliant solution can be provided, use must be viewed critically and may only be voluntary.

As a teacher, am I allowed to use ChatGPT to grade exams?

Examinations that go beyond highly pre-structured forms such as multiple choice examinations (e.g. theses) are protected by copyright. If the data entered as a prompt – as in the case of ChatGPT – is saved and reused, entering the text of a written exam is an impermissible reproduction and therefore a copyright infringement.

From the perspective of examination law, assessment may only be carried out by the examiners, not by software. It is therefore possible under examination law to use AI applications as an aid in the assessment process, but the result must be critically examined, and the grade can only be determined by the person responsible for the assessment.

What happens if there is a case where it is suspected that generative AI was used illegitimately?

The standard regulation for cheating applies here: If the candidate attempts to influence the result of an examination or course work by cheating or using unauthorised aids, the examination or course work in question is assessed as “insufficient” (5.0). The respective examiner or the invigilator shall place the finding on record. The assessment is made by the examination board. In case of repeated or otherwise serious attempts at cheating, the candidate can be excluded from taking further examinations and exmatriculated after a prior hearing.

In concrete terms, this means that the examiner must decide whether the student has used AI tools as an inadmissible aid or has not disclosed their use. This depends on the aids that have been designated as permissible for this examination. The formal decision as to whether an attempt at cheating has occurred is made by the examination board.

Help, I feel overwhelmed. Where can I get support on the topic of ChatGPT & Co?

At RUB you will receive support concerning both didactic and examination law issues. Many of the questions regarding teaching & learning that AI applications raise touch on basics that were relevant before the release of ChatGPT, as well, e.g.: How can students learn academic writing? How do I prevent plagiarism? How do I design my seminar to achieve the intended learning outcomes? The Zentrum für Wissenschaftsdidaktik (Centre for Teaching and Learning) will be happy to help you on these and similar questions.

We warmly invite students to seek advice from the RUB Writing Centre.

Other questions concern legal aspects, for example: How can I regulate the use of generative AI in my course in a legally secure way? How do I have to secure the change of an examination form in terms of examination law? Section 1 in Department 1 of the Administration will advise you on such questions.

Please feel free to contact us with your concerns!

Our Upcoming Events on AI

April 2026

KI:edu.nrw Topic Series: Is That “AI”, or Can It Be Thrown Out? Why We Should Talk About “AI” in a More Differentiated Way

Moderation: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Workshop

“Artificial intelligence” is one of the great buzzwords of our time: “AI” as a tool for education, “AI” as a saviour for the climate, “AI” as the key technology of this century. Yet it is often unclear what “AI” actually refers to: a chatbot based on a large language model, a robot that can execute simple motor commands, or the algorithm of a search engine…? In higher education, too, discussions about “AI” often suffer from a lack of clarity about what is meant. That is why we discussed this topic within the KI:edu.nrw project as well, which led to a project-internal working group that addresses this question.

This workshop aims to raise awareness of how we talk about “AI”. From ethical, linguistic, technical and practical perspectives, we want to show why it is often worthwhile to replace the expression “AI” in one's own statements with alternatives. In a subsequent working phase, participants experiment with finding alternative expressions for their own contexts.

Learning objectives

After the workshop, participants will be able to

- discuss from various perspectives why using the expression “Artificial Intelligence” or “AI” can be problematic, and
- find appropriate alternative expressions for their own statements.

Target group: All interested persons from member universities of DH.nrw

Speakers: Nadine Lordick, Selina Müller, Laura Platte

Registration is possible until 23.04.2026.

The topic series is organised by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

KI-Impulse: NotebookLM – Creating and Redesigning Content Based on Your Own Documents

NotebookLM is an AI application from Google that makes it possible to process extensive content on the basis of documents and to present it in new forms. You can chat with documents and create podcasts, infographics, mind maps and more.

We show how it works and how NotebookLM can be used in teaching.

Creating Images and Videos with AI: A Look at Tools and Their Possibilities

Moderation: Kathrin Braungardt

Numerous tools make it possible to generate images and videos with the help of AI technology. Instead of making them yourself, having images and videos generated could become everyday practice. The event gives an overview of various tools and techniques and demonstrates how they work, where they can be useful, and where open questions, problems and pitfalls may lie.

Is AI Not Neutral After All? How to Deal with AI and Bias

Moderation: Kathrin Braungardt

The event introduces the topic of bias and AI. Bias occurs when AI systems produce systematically distorted results that contain, for example, stereotypes or unfair or discriminatory elements. The underlying cause is that unrepresentative training data makes AI systems susceptible to bias. Examples are used to illustrate and explain bias problems in AI systems. The event also looks at how bias can be avoided or reduced.

Law & AI: What Applies and What Are the Consequences in Practice?

Moderation: Kathrin Braungardt

Content generated with the help of AI poses new challenges for the law. The event addresses the legal requirements and relevant legal questions that arise when using content generated with AI tools. Current examples are used to explain legal issues, and pointers and tips for practical application are given. The following topics are covered:

– Copyright questions

– Rules of good scientific practice / plagiarism

– Examination-law aspects

– Data protection law

– AI Regulation (KI-Verordnung)

– Ethical guidelines

May 2026

KI:edu.nrw Topic Series: ChatGPT, Just Do It – How to Use AI as Unreflectively as Possible

Moderation: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Workshop

“AI may be used, but its use should be critical and reflective.” Demands of this kind can be found in all sorts of handouts, guidelines and position papers. But what does that actually mean? In this workshop we will try to approach this question by turning the whole thing on its head: What does a completely uncritical and unreflective use of generative models such as ChatGPT, Gemini & Co. actually look like?

And what does that mean for meaningful use?

This workshop is aimed at all university members who are thinking about AI use and want to engage with it constructively and critically.

Target group: All interested persons from member universities of DH.nrw

Speaker: Nadine Lordick from the KI:edu.nrw subproject Generative Artificial Intelligence

Registration is possible until 03.05.2026.

The topic series is organised by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

KI-Impulse: Creating scripts with AI

Host: Sabine Römer

Email: sabine.roemer@rub.de

Where: Online

How can AI be used to develop scripts for teaching videos quickly and effectively? In this session we show how AI tools can support you as a teacher in structuring content, wording narration texts, and planning individual scenes. Using concrete examples, you will see how a first idea becomes a video script step by step. You will also receive practical tips on how AI-generated scripts can be used in a targeted way for short teaching videos or explanatory sequences in university teaching.

KI:edu.nrw topic series: Critical thinking and generative AI – ethical considerations on academic writing

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Talk

The talk examines critical thinking and reflective writing processes in the academic context. The central question is how the use of generative AI tools, such as ChatGPT or Microsoft Word Copilot, changes and shapes the writing and thinking process. It shows to what extent the common concern that critical thinking and reflective writing are being undermined is indeed justified. Rather than rejecting AI use wholesale, however, it also shows that the actual effects depend very strongly on how each of these tools shapes user interaction. Accordingly, the conscious and critical choice of writing tools should today be part of academic writing.

Target group: Students and teachers as well as higher education didactics specialists at member universities of DH.nrw

Speaker: Prof. Dr. Sebastian Weydner-Volkmann from the Ethics subproject

Registration is possible until immediately before the event begins.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

Giving presentations differently with creative tools and AI

Host: eTeam Digitalisierung

Email: zfw-eteamdigi@rub.de

Where: Online

Tired of boring presentations that all follow the same style? Would you like more varied slides and more engaging talks? Then this workshop is just right for you! We present various ways to make your presentations creative and exciting. We walk you through the presentation from start to finish and introduce various presentation tools, interactive elements, and new presentation formats. We also show you various AI tools that can support you in creating your presentation or give it the finishing touch.

Elisa from eTeam Digitalisierung looks forward to your registrations!

Questions in advance? Feel free to send them to zfw-eteamdigi@rub.de.

KI:edu.nrw topic series: "Analyze this table for me" – possibilities and limits of data analysis with generative AI in studying and teaching

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Workshop

Generative AI models seemingly offer the possibility of analyzing large amounts of data within seconds. Blindly feeding datasets into AI chatbots may be tempting, but the associated sources of error and hallucinations can easily lead to distorted results, especially with large and unfamiliar datasets.

In this workshop we dive into the world of data analysis with generative language models and learn to assess their strengths and weaknesses realistically. Together we identify sources of error in data processing and develop strategies to avoid them. The focus is not only on technical know-how but also on developing a critical awareness of the limits and possibilities of AI in data analysis.

Learning objectives

- Realistically assess the basic properties of generative language models

- Identify sources of error when generative language models process data

- Develop ideas for suitable workflows to identify and avoid errors

Target group: Anyone interested from member universities of DH.nrw

Speaker: Robert Queckenberg

Registration is possible until immediately before the event begins.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

KI-Impulse: AI and sustainability, a contradiction?

AI applications are considered resource-hungry, meaning they consume large amounts of electricity and water. What orders of magnitude are we talking about? Is the high resource consumption really unavoidable, and should it have consequences for the everyday use of AI technologies?

We examine the facts on the resource consumption of AI technologies and present various options for action.

June 2026

Generating test questions with AI

Host: Sabine Römer

Email: sabine.roemer@rub.de

In this introduction you will learn how to create test questions for your teaching efficiently with the help of AI. Using practical examples, we show how tasks can be created from lecture notes, presentations, or video transcripts, from multiple choice and matching tasks to fill-in-the-blank texts. You will also get an overview of how the generated questions can be implemented in Moodle and H5P.

KI:edu.nrw topic series: Architecture and interfaces of multi-agent systems (MAS) in education

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Workshop

Beyond LLMs, multi-agent systems (MAS) offer potential for personalized, scalable educational applications, from adaptive learning systems and automated feedback processes to intelligent tutoring systems. But how do you design such systems in a didactically sound way?

This workshop conveys practical knowledge about MAS architectures: participants learn about definitions, components, processes, and interactions between agents. Success criteria and evaluation methods are discussed using concrete examples from education.

In the practical part, participants design their own MAS architectures for educational scenarios of their choice. In small groups, these designs are peer-reviewed, constructively critiqued, and iteratively improved: a proven design process that builds concrete practical competence. Those who wish can afterwards have coding agents implement their own MAS design and thus move directly from concept to working prototype.

Learning objectives

- Know the definition of MAS

- Understand components, processes, and interactions

- Know examples of MAS in education

- Know success criteria and evaluation methods

- Be able to design your own MAS architectures

Target group: Anyone interested from member universities of DH.nrw

Speaker: Niels Seidel, KI:edu.nrw practice project SRL-Agent

Registration is possible until 15 May 2026.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

Make your choice! Game-based learning with AI, Actionbound and ThingLink

Host: Sabine Römer

Email: sabine.roemer@rub.de

Game-based learning can be a good way to make learning content motivating. In everyday work, however, there is often a lack of time, and perhaps sometimes of creative ideas, to put this into practice in one's own teaching.

In this workshop you will gain insight into the creative use of AI tools such as Midjourney and ChatGPT and develop stories for interactive tours and escape games together with us. We show you ways to generate ideas for learning tours and games quickly and with as little complication as possible, and to build them right away with the tools Actionbound and ThingLink.

KI:edu.nrw topic series: Foundations of an ethics of artificial intelligence

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Talk

The talk presents some foundations of an ethical analysis of AI systems and their effects on users and society. These rest on certain premises about the nature and expected further development of AI, for example the assumption that AI is a disruptive technology with significance for society as a whole. Based on these premises, certain approaches to solutions are proposed. Prominent among them is an application of Self-Determination Theory, developed by the psychologists Ryan and Deci, to the topic.

Learning objectives

- Necessary premises for an ethical understanding of AI

- Possible approaches from philosophy and psychology to the challenges posed by AI

Target group: Anyone interested from member universities of DH.nrw

Speaker: Dominik Bär from the KI:edu.nrw Ethics subproject

Registration is possible until 10 June 2026.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

KI:edu.nrw topic series: Exchange Forum II | Learning analytics in the advisory process of central student advisory services

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Exchange forum + input

In the first exchange forum we looked at which indicators generated through learning analytics can be used to identify possible study problems. There was also an introduction to the technical infrastructure POLARIS, which links various source systems such as RWTHonline, Dynexite, and Moodle and collects the learning analytics data accordingly.

The indicators relevant to RWTH have now been defined and extended with specific hypotheses. POLARIS is currently in a test phase to examine these hypotheses and sharpen the indicators further. In this second exchange forum, first results from the test phase will be presented. In addition, we would like to explore participants' views in working groups: how do other colleagues perceive the relevance and usefulness of the current indicators?

Target group: Student advisers from central student advisory services at member universities of DH.nrw

Speaker: Swenja Schiwatsch from the KI:edu.nrw Student Advising subproject

Registration is possible until 15 June 2026.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

Attention, student teachers: AI tools for use in schools

Host: eTeam Digitalisierung

Email: zfw-eteamdigi@rub.de

Where: Online

AI tools now play a role in almost all areas of life, and of course in schools too. Our session gives you an overview of various tools for the meaningful use of AI in schools. We present various AI tools that can support teachers in planning, delivering, and assessing lessons. Concrete suggestions and scenarios for planning and organizational use are of course included! We also discuss together possible scenarios for actively using AI tools with pupils in the classroom.

We look forward to your registrations!

Questions in advance? Feel free to send them to zfw-eteamdigi@rub.de.

KI:edu.nrw topic series: "So far we have done this by gut feeling." – A practice report on the development and evaluation of a dashboard for task difficulty

Host: KI:edu.nrw

Website: https://learning-aid.blogs.ruhr-uni-bochum.de/

Talk

Are there tasks in my exams that are harder than I intend? Does a particular topic or task type actually perform worse?

For the quality assurance of exam questions, teachers have so far had hardly any tools that present cross-exam metrics for individual tasks. For the RWTH e-examination system Dynexite, we have therefore developed a dashboard that gives teachers insight into the difficulty of their tasks. The technical infrastructure for this is provided by the learning analytics software POLARIS, which is being developed in the KI:edu.nrw project.

In this talk we report on the development process of the dashboard: from initial requirements analyses through wireframes and a technical prototype, a workshop in the last KI:edu.nrw topic series, usability tests, and interviews with teachers, to a comprehensive dashboard that is now available to all teachers at RWTH.

Learning objectives

After the talk, participants will be able to

• name the challenges examiners face in the quality assurance of tasks,

• describe various methods used in developing and evaluating a dashboard, and

• discuss the potential and limits of task difficulty as a metric in the quality assurance of exams.

Target group: Teachers at member universities of DH.nrw

Speakers: Laura Platte, Martin Breuer from the Learning Analytics subproject

Registration is possible until immediately before the event begins.

The topic series is organized by the KI:edu.nrw project.

An overview of all topics and dates is available here: https://ki-edu-nrw.rub.de/themenreihe2026

July 2026

AI as a learning partner? (But done right!)

Host: eTeam Digitalisierung

Email: zfw-eteamdigi@rub.de

Where: Online

In 60 minutes we look at how AI becomes a real sparring partner: for structuring thoughts, understanding complex content, or revising your own texts. And just as honestly, we talk about where the limits lie, for example when it comes to writing texts or to independent literature research.

Celina from eTeam Digitalisierung and Nadine Lordick from the ZfW's KI:edu.nrw project look forward to your registrations!

Questions in advance? Feel free to send them to zfw-eteamdigi@rub.de.

News on AI in higher education in our blog

Learning with AI: learning routes

Discuss, exchange, reflect: AI and learning analytics in the KI:edu.nrw topic series 2026

Introductory session: Testing AI chatbots in Moodle

ZfW HÖRsaal: AI – what does that even mean? A conversation about artificial intelligence and AI competence(s?) at the ZfW

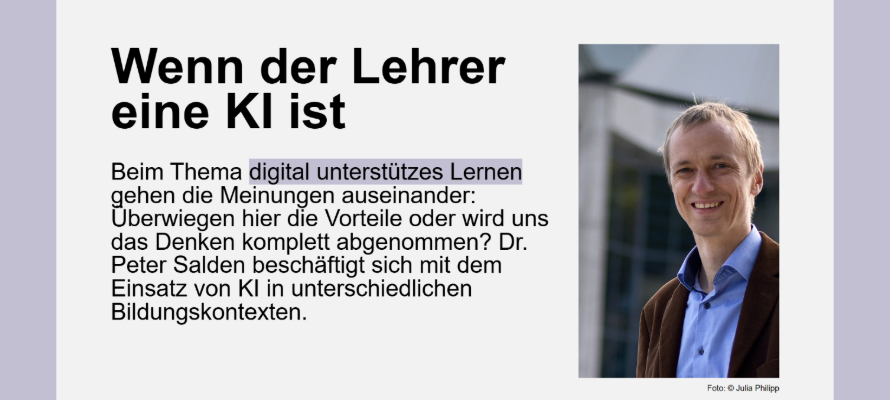

Stadtgold reading tip: When the teacher is an AI

New online offering: KI-Impulse for teaching

Science didactics between fjords, mountains, and world heritage

Reading tip: AI is not a force of nature descending upon us

SAVE THE DATE: 5th Learning AID in September 2026 at Ruhr-Universität Bochum

ZfW HÖRsaal: Lively, interdisciplinary, overarching – that is the Learning AID

Statement "University & AI. For a climate of trust"

News on AI from NRW state politics

That was the fourth Learning AID – a look back at the 2025 conference

Writing with "AI"

Legal opinion by KI:edu.nrw on the significance of the European AI Regulation for universities published

GPT@RUB now offers an AI model with data sovereignty in NRW

New AI strategy paper for NRW universities

Capable of acting despite uncertainty in dealing with AI. A workshop concept

Learning AID 2025: Exciting topics, interactive participation formats, and many opportunities for exchange

Strengthening AI competences: KI-NEL-24-NRW

Further information

Contact

Kathrin Braungardt

eLearning (RUBeL)

Nadine Lordick

Writing Center (Schreibzentrum)